Why Human Hackers Still Win in the AI Era | Intigriti CEO Insights 2026 New

In this article, I’ve spent over a decade building Intigriti around one core belief: the best defence against attackers who think creatively is people who think creatively too. AI doesn’t change that. If anything, the latest data from 2025 and 2026 makes the case stronger than ever.

This piece is honest about both sides. AI is genuinely useful — and it is genuinely limited. Understanding that distinction isn’t just philosophy. It’s the difference between a security programme that works and one that leaves you exposed.

The Beating Heart of Bug Bounty

In the ten-plus years of building Intigriti, we’ve lived through a lot of “this changes everything” moments. COVID-19 rewrote how security teams hire and trust people they’ve never met in person. The side-hustle economy turned ethical hacking into a legitimate, skill-based career path for thousands of talented people around the world. Regulation arrived. Customer expectations shifted. The threat landscape matured. And the researcher community grew into a truly global workforce.

Through all of it, one thing held constant: the hackers were the heart of this industry. Not the platforms. Not the tooling. The people who sit down with nothing but curiosity and a laptop and find the vulnerability your $2 million security stack missed.

AI is the next big shift — and it’s arguably bigger than anything that came before it. But here’s what hasn’t changed: it does not matter whether an AI tool, a nation-state actor, or an opportunistic attacker finds a vulnerability. What matters is whether you find it first. Human creativity has always been the reason bug bounty exists. AI makes that work faster and sharper. It does not make the human redundant.

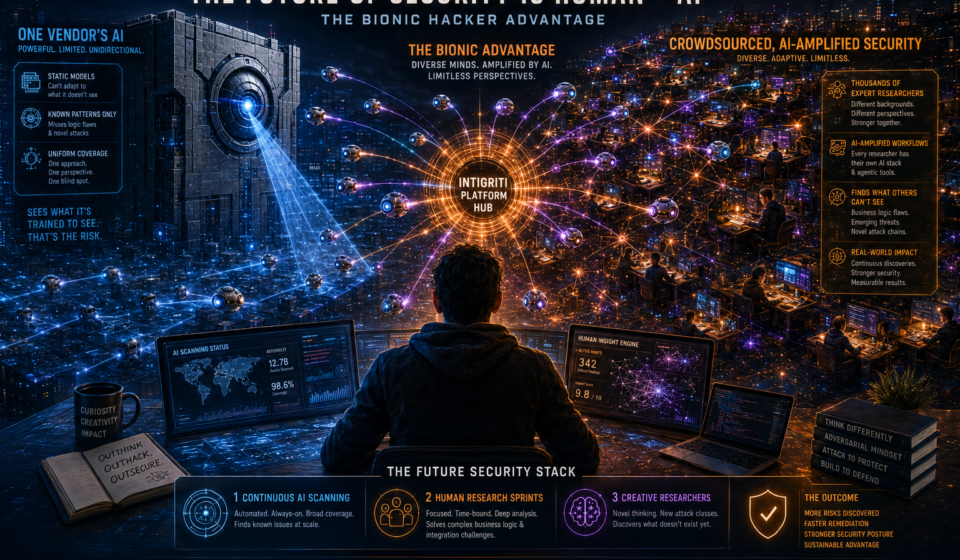

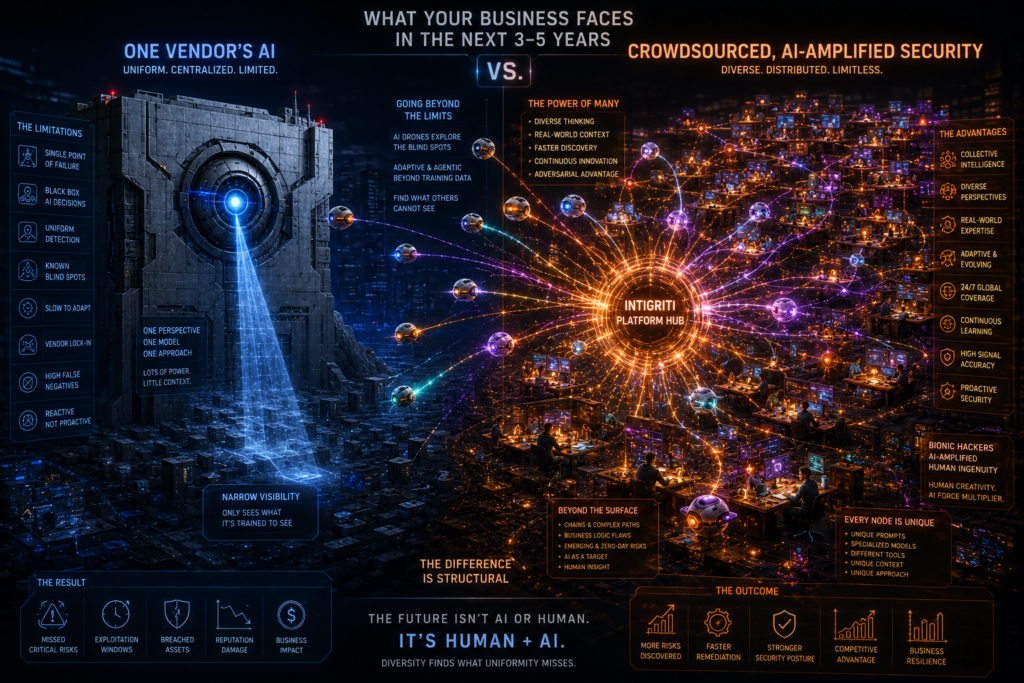

“One vendor’s AI versus thousands of hackers amplified by AI. The second approach finds things the first structurally cannot.”

— Stijn Jans, CEO & Founder, Intigriti

What the 2025–2026 Data Actually Shows

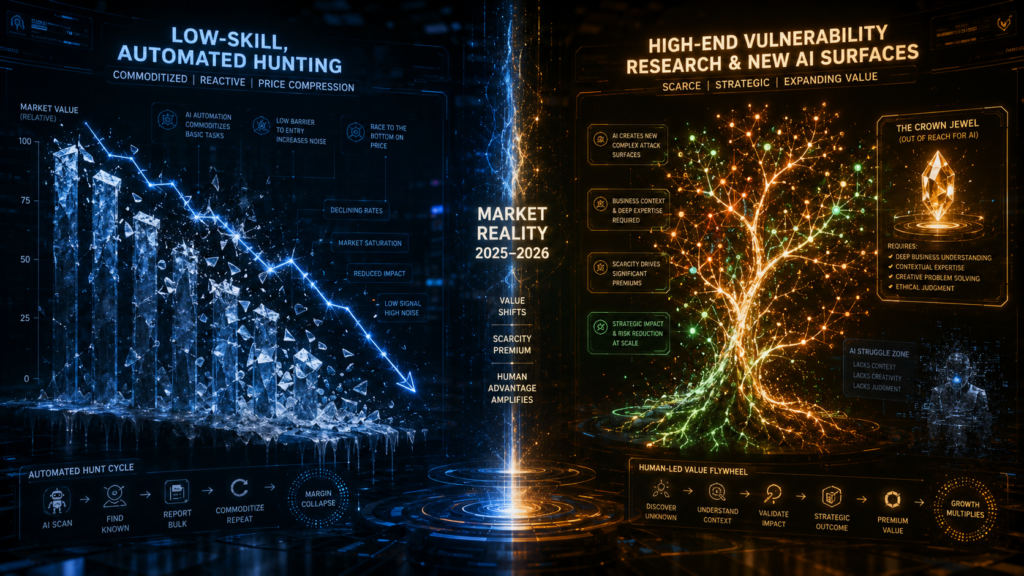

Let’s stop speculating and look at the numbers. The bug bounty market did not collapse when AI arrived. It grew — and the data tells a nuanced story that most AI-hype headlines miss entirely.

What these numbers tell us is not that AI is killing bug bounty. They tell us the opposite: the market is expanding precisely because AI has created entirely new attack surfaces — and human researchers are the ones discovering and exploiting them responsibly. What is shrinking, as one analysis put it bluntly, is “the return on low-skill, automated, repetitive hunting.” That’s a feature, not a bug.

Key market signal for 2026

Bugcrowd predicts that bounty rewards for high-end vulnerability research will increase in 2026 — not decrease. Their reasoning: AI can detect trivial misconfigurations, but the “crown jewel compromise paths requiring deep understanding of business operations still depend entirely on human researchers, who are in short supply.

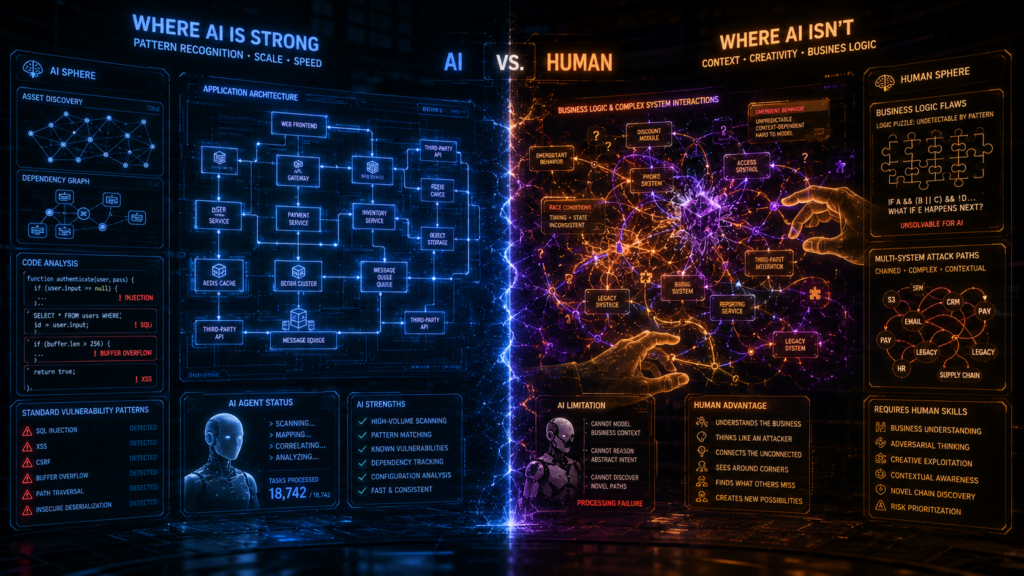

Where AI Is Strong — And Where It Isn’t

To have an honest conversation about AI in security, you need to be precise. Sweeping claims in either direction — “AI will find everything” or “AI is useless” — are both wrong. Here is what the evidence actually shows.

| Task | AI capability today | Best suited to |

|---|---|---|

| Surface mapping & asset discovery | Excellent — continuous, scalable | AI |

| Known vulnerability pattern recognition | Strong, especially on source code review | AI |

| Dependency & configuration scanning | Strong — fast and consistent | AI |

| Report summarisation & triage support | Increasingly useful, still needs oversight | Both |

| Interacting with live, running systems | Still limited — poor state management | Human |

| Business logic flaws | Very weak — requires contextual understanding | Human |

| Chained, multi-system attack paths | Unable to build and maintain state reliably | Human |

| Novel vulnerability classes in emerging tech | AI can assist, but human insight leads | Human |

| Adversarial creativity & lateral thinking | Cannot replicate — pattern matching ≠ creativity | Human |

Current large language models are genuinely good at reasoning over source code, spotting known vulnerability patterns, and flagging insecure constructs. But they remain far less effective at understanding how an application behaves in the real world — adapting when something unexpected happens, or understanding what a product does as opposed to what it technically contains. That gap is closing, but it remains significant in 2026.

The UK AI Security Institute’s research confirms this: while AI cyber-task capability is improving quickly (with the length of tasks frontier systems can complete unassisted doubling roughly every eight months), top-tier researchers consistently outperform AI on anything requiring real-world context or multi-system reasoning.

The Rise of the Bionic Hacker

This isn’t the first time the security industry has been here. When automated scanners arrived, people predicted the end of manual penetration testing. The opposite happened: scanners raised the floor, which made the ceiling matter more. Good researchers got sharper because they could skip tedious, repetitive work. New researchers gained entry because the tool lowered the barrier to finding real issues.

AI is the same pattern, just at a different magnitude.

“We’ve entered the era of the ‘bionic hacker’ — human researchers using agentic AI to collect data, triage, and advance discovery.”

— Crystal Hazen, Senior Bug Bounty Program Manager, HackerOne

The bionic hacker doesn’t fear AI. They use it aggressively. AI handles the reconnaissance, the initial scan, the pattern matching, and early-stage triage. The human applies what AI cannot: the adversarial creativity that comes from genuinely understanding what a product does, what a company cares about, and where the gaps between intention and implementation tend to open up.

At Intigriti, this is exactly what we see. The researchers achieving the highest-impact findings in 2025 and 2026 aren’t those who rely purely on manual intuition, nor those who run automated tools and submit whatever comes back. They’re the ones who’ve built a personal AI stack that amplifies their thinking — and they use it to go deeper, not wider.

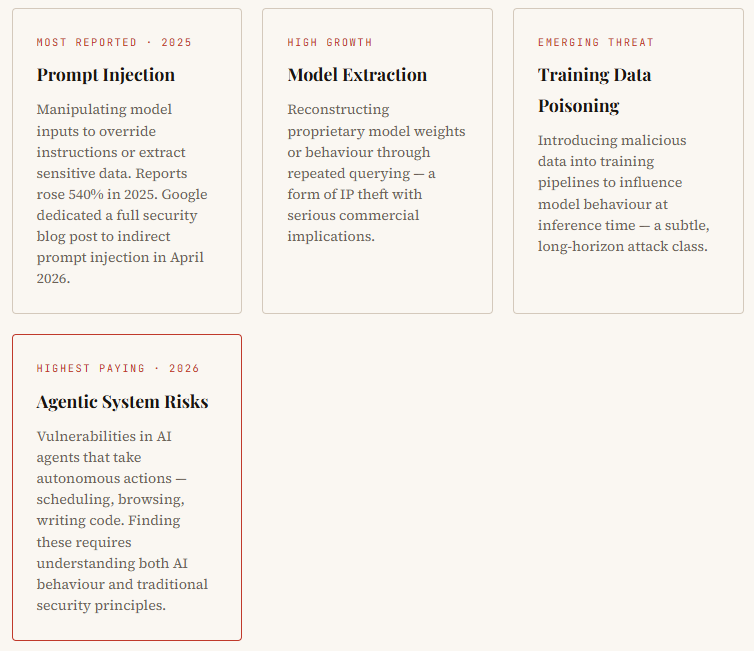

AI as a Target: The New Security Frontier

Here is a development many people in traditional security are still catching up to: AI systems are now a primary attack surface in their own right. And the vulnerability classes they introduce are unlike anything that came before.

New vulnerability classes unique to AI systems

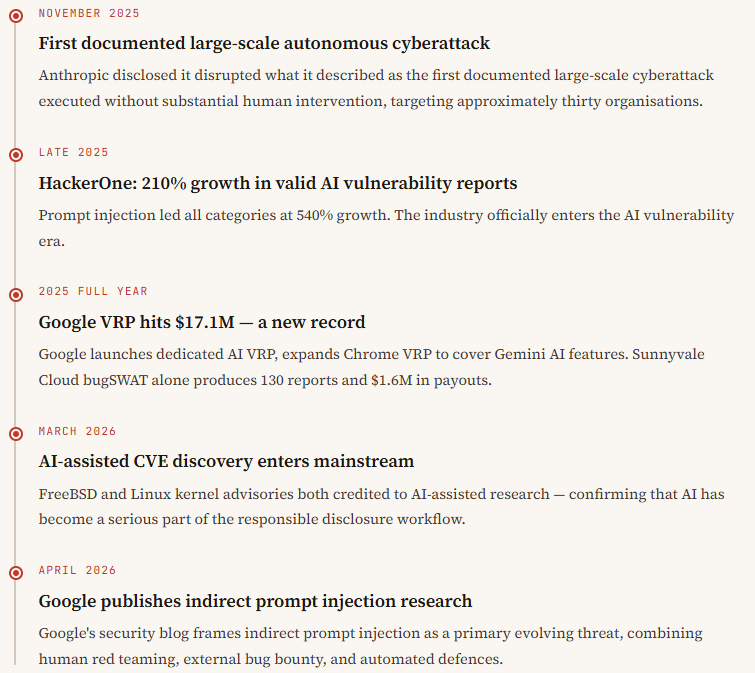

Real-world signal: AI-assisted vulnerability discovery

In March 2026, FreeBSD published an advisory for a remote code execution vulnerability in RPCSEC_GSS, credited to a researcher using Claude, Anthropic’s AI model. In April 2026, a Linux kernel NFS replay-cache heap overflow was similarly linked to AI-assisted discovery. These aren’t hypotheticals. AI-augmented research is already producing critical findings in production systems.

Google recognised this shift and launched a dedicated AI Vulnerability Reward Programme in 2025 — a standalone programme with clear reward tiers specifically for AI-related findings, separate from its traditional web bug bounty. They’ve extended their Chrome VRP to include vulnerabilities in AI-powered features like Gemini integrations. The AI bug bounty market now offers payouts ranging from $200 for entry-level findings to $100,000 for exceptional discoveries, with the top programmes collectively paying tens of millions of dollars in 2025.

Key AI security milestones: 2025–2026

What Your Business Faces in the Next 3–5 Years

The security market is about to force a very practical choice on every CISO and security leader. You can buy a single AI security product. Or you can work with a large, diverse community of researchers — each running their own AI stack, their own models, their own prompts, their own creative angle — on a platform like Intigriti.

Think carefully about that comparison. One vendor’s AI versus thousands of skilled humans, each amplified by AI. The second approach finds things that the first structurally cannot. Diversity of tooling combined with diversity of thinking is the security moat.

Bugcrowd 2026 prediction

“In 2026, leaders will be able to predict security maturity and resilience by looking at programme measures like how competitive researcher reward payouts are, what programme participation looks like, and how many researchers hunt on programmes long term.”

In other words: the quality of your bug bounty programme is becoming a leading indicator of your overall security posture — not just a nice-to-have.

Businesses that fail to understand this will buy AI-only security solutions and learn the hard way that real attackers are still human — and they are using AI too. The asymmetry is brutal: defenders must protect everything; attackers only need to find one gap. A diverse, AI-amplified research community is currently the best systematic answer to that problem.

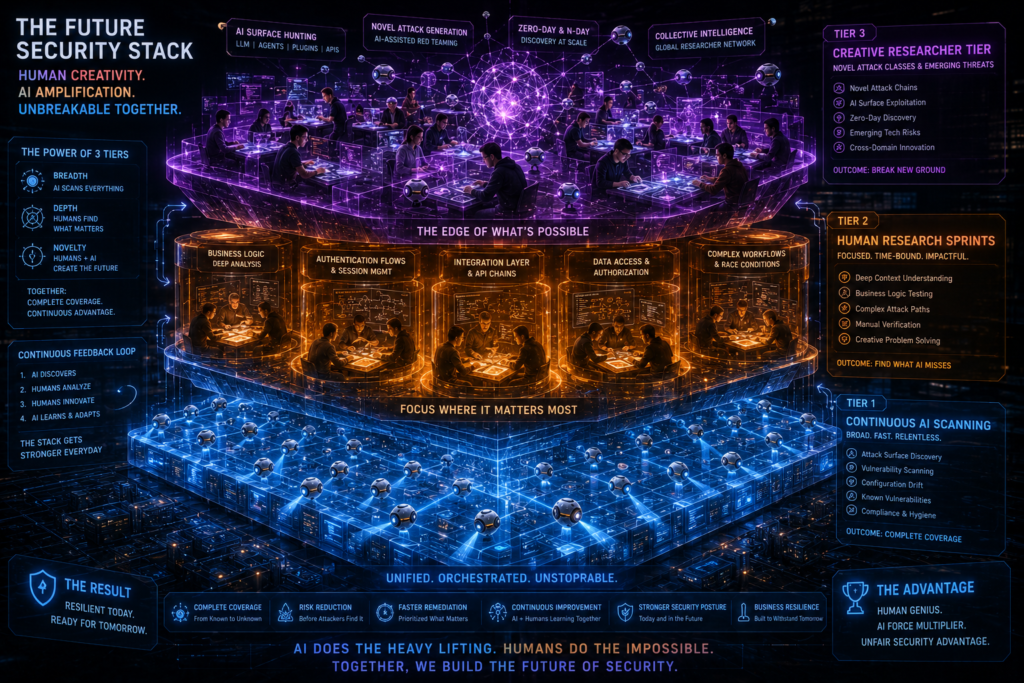

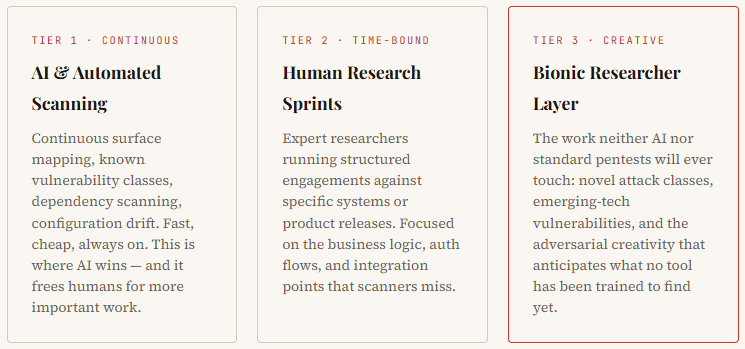

The Future Security Stack: Three Tiers

In three to five years, the most effective security programmes will likely operate with explicit, layered tiers — not because this is a nice framework to present to a board, but because each tier catches genuinely different classes of risk.

Customers who understand and invest in all three tiers will spend less and find more. The platforms that win are the ones that hold the human layer sacred — treating every product decision as an opportunity to amplify the researcher, not replace them.

That’s not marketing language. It’s an architectural choice. And that is what we are building Intigriti to be.

Key Takeaways

- Bug bounty is not dying — total payouts reached $81M in 2025 and are still growing.

- AI has created entirely new attack surfaces (prompt injection, model extraction, agentic risks), opening new revenue opportunities for ethical hackers.

- 82% of researchers already use AI in their work — the “bionic hacker” is not the future, it is the present.

- AI excels at breadth; humans remain essential for depth, creativity, and context-dependent findings.

- Businesses that buy AI-only security solutions leave themselves exposed to threats that only human creativity can surface.

- The winning security model layers continuous AI scanning, human research sprints, and a creative researcher tier.

- High-end vulnerability research rewards will increase in 2026 — not decrease — as the talent capable of finding “crown jewel” paths becomes scarcer.

Frequently Asked Questions

Straight answers to the questions we hear most often about AI and bug bounty.

No — at least not for the work that matters most. AI is excellent at automating surface-level, repetitive vulnerability scanning. But it cannot replicate the creative, context-driven reasoning human researchers use to find business-logic flaws, chained attack paths, and vulnerabilities in new technology categories. Bugcrowd confirmed in late 2025 that “crown jewel compromise paths” — the findings that cause the most damage — still require deep human understanding of how a business actually works.

About 82% of ethical hackers now use AI tools in their daily workflows, according to Bugcrowd’s 2026 research. In practice, researchers use AI to automate reconnaissance, reason over source code, summarise lengthy documentation, help generate targeted wordlists, and triage findings. The best researchers — what HackerOne calls “bionic hackers” — use AI to eliminate repetitive work, so they can spend more time on the high-value, creative analysis that actually leads to critical findings.

Valid AI-related vulnerability reports grew 210% in 2025 (HackerOne), with prompt injection reports surging 540%. Beyond AI-specific bugs, Bugcrowd reported broken access control critical vulnerabilities up 36%, API vulnerabilities up 10%, network vulnerabilities doubling, and hardware vulnerabilities rising 88%. The emergence of AI as a product surface has opened an entirely new set of vulnerability classes — including model extraction, training data poisoning, and adversarial inputs — that barely existed as categories three years ago.

Absolutely — and earning potential is increasing for skilled researchers. HackerOne paid out $81 million in 2025 across 580,000+ validated vulnerabilities. Google’s VRP paid a record $17.1 million, up roughly 40% year-on-year. AI bug bounty programmes now offer $200–$100,000 per finding, with 2026 budgets larger than 2025. The key shift: returns on low-skill, automated, repetitive hunting are shrinking, while rewards for deep, creative, high-impact research are growing. Researchers who specialise in AI security are particularly well-positioned for the next five years.

The term, coined by HackerOne, describes a human security researcher who uses agentic AI systems to dramatically amplify their capabilities. A bionic hacker delegates data collection, surface scanning, report triage, and early pattern-matching to AI — while applying their own creative, strategic thinking to the problems AI cannot solve: understanding business context, chaining vulnerabilities across systems, and finding attack paths that require genuine adversarial imagination. This approach consistently outperforms both pure manual testing and fully automated scanning.

AI products have created several vulnerability categories that didn’t meaningfully exist before: prompt injection (manipulating model inputs to override instructions or extract data), indirect prompt injection (embedding malicious instructions in data that AI agents process), model extraction (reconstructing proprietary model behaviour through repeated queries), training data poisoning (corrupting model behaviour via malicious training inputs), adversarial inputs (inputs crafted to cause misclassification or unsafe outputs), and agentic system risks (vulnerabilities in AI agents that take autonomous real-world actions). Google published a dedicated advisory on indirect prompt injection in April 2026.

The most effective approach is a three-tier model: Tier 1 — continuous AI and automated scanning for known vulnerability classes and configuration drift; Tier 2 — time-bound human research engagements focused on business logic, authentication, and integration-layer vulnerabilities; Tier 3 — a creative researcher layer (a diverse, global bug bounty programme) for the novel attack classes that neither AI nor standard pentests are designed to find. Businesses that rely solely on AI-only solutions risk leaving critical, human-discoverable vulnerabilities permanently in place.

The core advantage of crowdsourced security is diversity of both tooling and thinking. A single AI security product applies one vendor’s model, trained on one dataset, with one set of assumptions. A platform like Intigriti gives you access to thousands of researchers, each running their own AI stack, their own prompts, and their own creative methodology. That diversity catches things that any single tool — however sophisticated — will structurally miss. The two approaches aren’t in competition; the most resilient programmes use both.